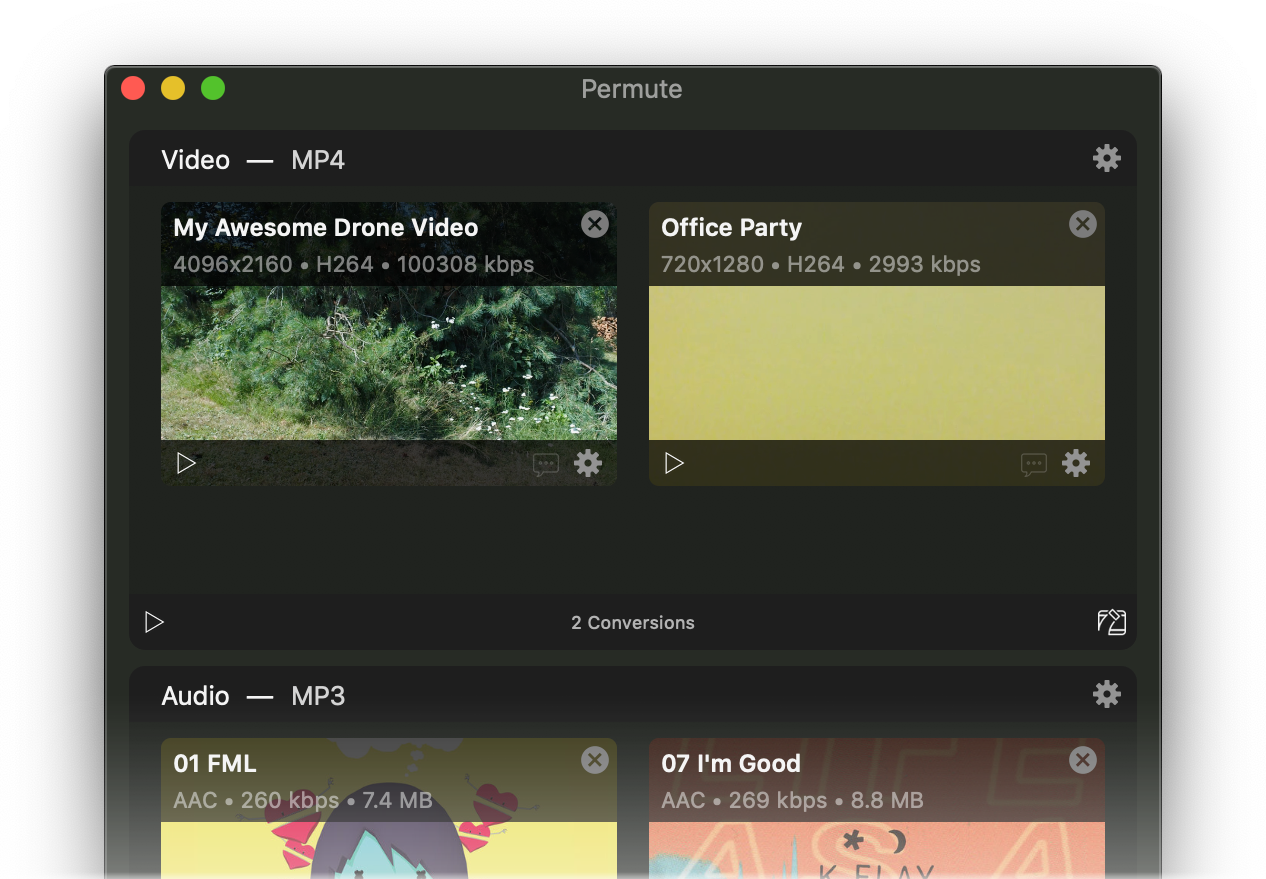

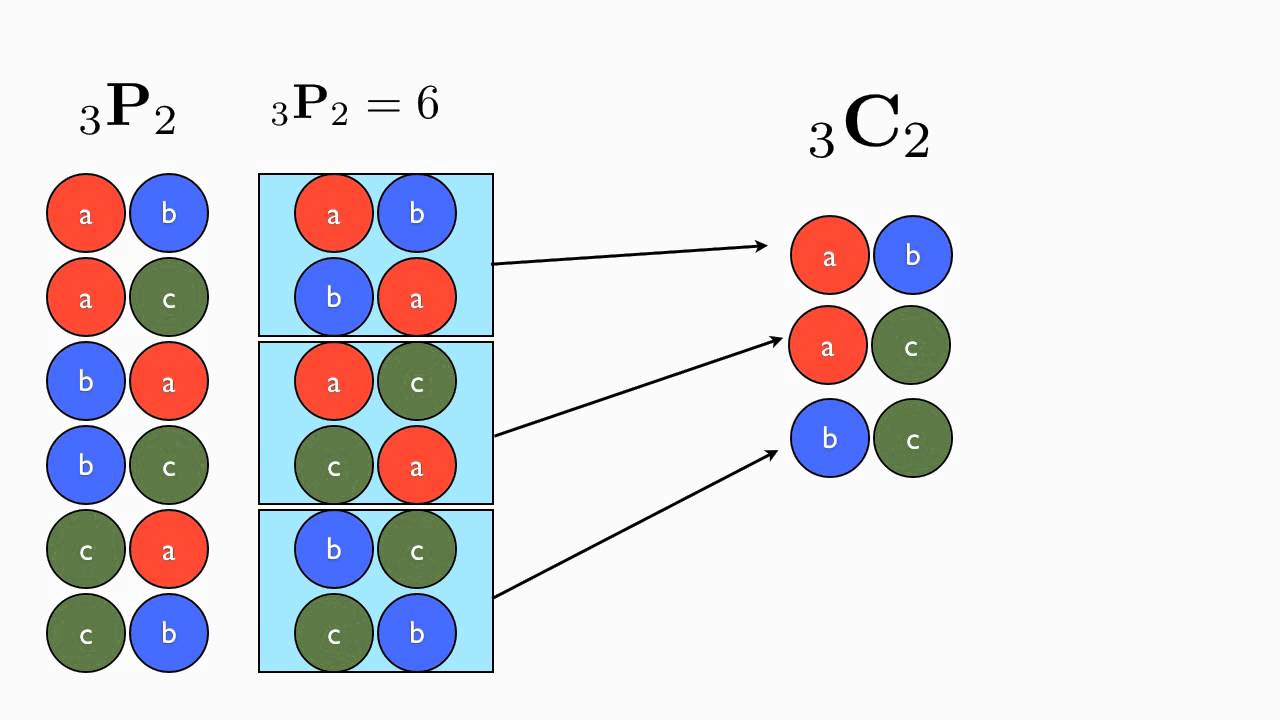

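`Permute` permutes the dimensions of the input according to a given pattern. Its `dims` argument is the permutation pattern: a tuple of integers that does not include the samples dimension. Indexing starts at 1, so, for instance, `(2, 1)` permutes the first and second dimensions of the input.

Input shape: arbitrary. Use the keyword argument `input_shape` (tuple of integers, does not include the samples axis) when using this layer as the first layer in a model.

Output shape: same as the input shape, but with the dimensions re-ordered according to the specified pattern. For example, with `Permute((2, 1))` and `input_shape=(10, 64)`, `model.output_shape` becomes `(None, 64, 10)`.

Like other Keras layers, `Permute` provides `from_config(cls, config)`, which creates a layer from its config, and `count_params()`, which counts the total number of scalars composing the weights. `count_params()` raises `ValueError` if the layer isn't yet built (in which case its weights aren't yet defined).

In practice, the `Permute` layer is quite picky about its `dims` argument, despite the docs clearly saying `dims: Tuple of integers`.

TensorFlow.js exposes the same layer as `tf.layers.permute(args)`. Parameters: `dims`, an array of integers that represents the permutation pattern (it does not include the samples dimension).

Other libraries have their own permute operations. The NumPy `transpose()` function reverses or permutes the axes of an array, while `numpy.random.permutation(x)` randomly permutes a sequence (for a multi-dimensional array, only along its first axis). PyTorch's `torch.permute()` rearranges the dimensions of the original tensor according to the desired ordering and returns a view with the dimensions permuted; it holds the same elements, so the total size is unchanged.

A related question on Stack Overflow: how do I permute a particular dimension of my tf tensor in the following pattern, e.g. `0,1,2,3,4 -> 1,2,3,4,0`? More generally, how do I do a rotation by n positions?

In TensorFlow.js, a `tf.Tensor` object represents an immutable, multidimensional array of numbers that has a shape and a data type. For performance reasons, functions that create tensors do not necessarily perform a copy of the data passed to them (e.g. if the data is passed as a `Float32Array`), and changes to the data will change the tensor.

Permuting axes is also a routine step when feeding data to an RNN. The following program trains an LSTM on MNIST by reading each 28x28 image as 28 time steps of 28-pixel rows; `tf.transpose(X, [1, 0, 2])` swaps the batch and time-step axes before the input is split into one tensor per time step. It uses the TensorFlow 1.x graph API (`tf.Session`, `tf.placeholder`, `tf.contrib.rnn`, `tensorflow.examples.tutorials.mnist`), which was removed in TensorFlow 2.x, so it will not run unmodified there.

```python
import numpy as np
import tensorflow as tf  # TensorFlow 1.x
from tensorflow.contrib import rnn
from tensorflow.examples.tutorials.mnist import input_data

# configuration variables
# each input size = input_vec_size = lstm_size = 28
input_vec_size = lstm_size = 28
time_step_size = 28
batch_size = 128  # training batch: 128 images
test_size = 256   # test batch: 256 images


def init_weights(shape):
    return tf.Variable(tf.random_normal(shape, stddev=0.01))


def model(X, W, B, lstm_size):
    # X, input shape: (batch_size, time_step_size, input_vec_size)
    XT = tf.transpose(X, [1, 0, 2])  # permute time_step_size and batch_size
    # XT shape: (time_step_size, batch_size, input_vec_size)
    XR = tf.reshape(XT, [-1, lstm_size])  # each row has input for each lstm cell (lstm_size=input_vec_size)
    # XR shape: (time_step_size * batch_size, input_vec_size)
    X_split = tf.split(XR, time_step_size, 0)  # split them to time_step_size (28 arrays)
    # Each array shape: (batch_size, input_vec_size)

    # Make lstm with lstm_size (each input vector size)
    lstm = rnn.BasicLSTMCell(lstm_size, forget_bias=1.0, state_is_tuple=True)

    # Get lstm cell output, time_step_size (28) arrays with lstm_size output: (batch_size, lstm_size)
    outputs, _states = rnn.static_rnn(lstm, X_split, dtype=tf.float32)

    # Linear activation on the last output
    return tf.matmul(outputs[-1], W) + B, lstm.state_size  # state size to initialize the state


mnist = input_data.read_data_sets("MNIST_data/", one_hot=True)
trX, trY = mnist.train.images.reshape(-1, 28, 28), mnist.train.labels
teX, teY = mnist.test.images.reshape(-1, 28, 28), mnist.test.labels

X = tf.placeholder(tf.float32, [None, time_step_size, input_vec_size])
Y = tf.placeholder(tf.float32, [None, 10])
W = init_weights([lstm_size, 10])  # maps the last LSTM output to 10 labels
B = init_weights([10])

py_x, state_size = model(X, W, B, lstm_size)
cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=py_x, labels=Y))
train_op = tf.train.RMSPropOptimizer(0.001, 0.9).minimize(cost)
predict_op = tf.argmax(py_x, 1)

# Launch the graph in a session
with tf.Session() as sess:
    tf.global_variables_initializer().run()
    # one pass over the training set in batches of batch_size
    for start, end in zip(range(0, len(trX), batch_size), range(batch_size, len(trX) + 1, batch_size)):
        sess.run(train_op, feed_dict={X: trX[start:end], Y: trY[start:end]})
    # accuracy on a random test batch of test_size images
    idx = np.random.permutation(len(teX))[:test_size]
    print(np.mean(np.argmax(teY[idx], axis=1) == sess.run(predict_op, feed_dict={X: teX[idx]})))
```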
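The transpose, reshape and split steps inside `model()` can be checked without TensorFlow. The sketch below is a minimal illustration, assuming `np.transpose`, `np.reshape` and `np.split` as stand-ins for `tf.transpose`, `tf.reshape` and `tf.split` called with the same arguments; the batch of 4 random "images" is made up purely to keep the shapes readable.

```python
import numpy as np

batch_size, time_step_size, input_vec_size = 4, 28, 28  # small batch for illustration

# X, input shape: (batch_size, time_step_size, input_vec_size)
X = np.random.default_rng(0).random((batch_size, time_step_size, input_vec_size))

XT = np.transpose(X, [1, 0, 2])            # (time_step_size, batch_size, input_vec_size)
XR = np.reshape(XT, [-1, input_vec_size])  # (time_step_size * batch_size, input_vec_size)
X_split = np.split(XR, time_step_size, 0)  # time_step_size arrays, each (batch_size, input_vec_size)

print(XT.shape, XR.shape, len(X_split), X_split[0].shape)  # (28, 4, 28) (112, 28) 28 (4, 28)

# Array t of X_split holds row t of every image in the batch.
print(all(np.array_equal(X_split[t], X[:, t, :]) for t in range(time_step_size)))  # True
```

This is why the transpose comes first: without swapping the batch and time-step axes, the reshape-and-split would slice the batch by image instead of by row.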
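Because the `Permute` pattern is 1-indexed and skips the samples axis, a Keras-style `dims` tuple corresponds to a 0-indexed transpose permutation with the batch axis 0 prepended. The sketch below makes that mapping concrete in NumPy; `permute_like_keras` is a hypothetical helper, not part of Keras, and it mirrors only the shape behaviour of the layer.

```python
import numpy as np


def permute_like_keras(batch, dims):
    """Reorder the non-batch axes of `batch` the way a Keras-style Permute(dims) would.

    Hypothetical helper: `dims` is 1-indexed and excludes the samples axis,
    so axis 0 stays in front and the 0-indexed transpose permutation is (0,) + dims.
    """
    dims = tuple(int(d) for d in dims)  # accept a tuple, list, or array of ints
    if sorted(dims) != list(range(1, batch.ndim)):
        raise ValueError(f"dims must be a permutation of 1..{batch.ndim - 1}, got {dims}")
    return np.transpose(batch, (0,) + dims)


batch = np.zeros((32, 10, 64))  # 32 samples, each of shape (10, 64)
print(permute_like_keras(batch, (2, 1)).shape)  # (32, 64, 10)
```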
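The Stack Overflow question about the pattern `0,1,2,3,4 -> 1,2,3,4,0` can be read two ways: rotating the order of the axes (axis 0 moves to the end) or rotating the elements along one axis. A minimal sketch of both follows, assuming NumPy's `np.transpose` and `np.roll` as stand-ins for `tf.transpose` and `tf.roll`, which take analogous arguments; the helper names `rotate_axes` and `rotate_along` are hypothetical.

```python
import numpy as np


def rotate_axes(x, n):
    """Rotate the order of the axes left by n: with n=1, axes (0,1,2,3,4) -> (1,2,3,4,0)."""
    k = x.ndim
    perm = [(i + n) % k for i in range(k)]
    return np.transpose(x, perm)  # tf.transpose(x, perm) takes the same perm list


def rotate_along(x, n, axis):
    """Rotate the elements along one axis left by n positions (new index i holds old index i+n)."""
    return np.roll(x, -n, axis=axis)  # tf.roll(x, shift=-n, axis=axis) shifts the same way


x = np.zeros((2, 3, 4, 5, 6))
print(rotate_axes(x, 1).shape)  # (3, 4, 5, 6, 2)

v = np.arange(5)
print(rotate_along(v, 1, axis=0))  # [1 2 3 4 0]
```

Rotating by n positions is then just a different `n`; a negative `n` rotates in the other direction in both helpers.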
